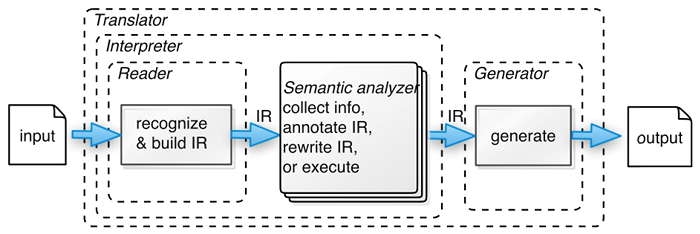

class: center, middle

# Lexical Analysis

_CMPU 331 - Compilers_

---

# Lexical Analysis

The first stage of compilation

(source: _Language Implementation Patterns_ by Terence Parr)

---

# Lexical Analysis

The first stage of compilation

```
(+ 3 4)
```

* The input is just a string of characters
* Split it into substrings, called tokens

---

# What is a Token?

A word in a particular "syntactic" category

* In English: noun, verb, adjective ...
* In a programming language: identifier, integer, operator...

---

# Tokens

Tokens correspond to sets of strings

* Integer: a non-empty string of digits
* Identifier: string of letters or digits, starting with a letter
* Operator: "+", "-"...
* Keyword: "if", "else" ...
* Whitespace: a non-empty sequence of spaces, tabs, newlines...

---

# Tokens

* Classify the substrings in a program
* Output of lexer is a stream of tokens...
* ...tokens are input to the parser
* Parser builds on the distinctions between tokens

---

# How to Design a Lexer - Step 1

Define a finite set of tokens

* Tokens describe all substrings of interest
* Choice of tokens depends on language, design of parser

---

# Example

For our simple Scheme program:

```
(+ 3 4)
```

We could identify the useful tokens as:

```
OPENPAREN ADDOP INTEGER CLOSEPAREN
```

---

# How to Design a Lexer - Step 2

Describe which strings belong to each token

* Integer: a non-empty string of digits
* Operator: "+", "-" ...
* Whitespace: a non-empty sequence of spaces, tabs, newlines...

---

# How to Implement a Lexer

An implementation must do two things

1. Recognize substrings corresponding to tokens
2. Also capture the value or _lexeme_ of the token

For example, the token `ADDOP` corresponds to the lexeme "`+`".
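---

# Example

A hand-written lexer for the Scheme program `(+ 3 4)` can be sketched in Python. This is a minimal illustration, not a course-provided implementation: the function name `lex_scheme` is hypothetical, and only the four token kinds from the earlier example are recognized. Each token is returned together with its lexeme, and whitespace is skipped.

```python
DIGITS = "0123456789"

def lex_scheme(text: str) -> list[tuple[str, str]]:
    """Hypothetical sketch: split text into (token, lexeme) pairs."""
    tokens = []
    i = 0
    while i < len(text):
        c = text[i]
        if c.isspace():                  # whitespace is discarded, not passed on
            i += 1
        elif c == "(":
            tokens.append(("OPENPAREN", c))
            i += 1
        elif c == ")":
            tokens.append(("CLOSEPAREN", c))
            i += 1
        elif c == "+":
            tokens.append(("ADDOP", c))
            i += 1
        elif c in DIGITS:
            start = i                    # INTEGER: consume the whole run of digits
            while i < len(text) and text[i] in DIGITS:
                i += 1
            tokens.append(("INTEGER", text[start:i]))
        else:
            raise ValueError(f"unexpected character {c!r} at position {i}")
    return tokens

print(lex_scheme("(+ 3 4)"))
# [('OPENPAREN', '('), ('ADDOP', '+'), ('INTEGER', '3'), ('INTEGER', '4'), ('CLOSEPAREN', ')')]
```

`INTEGER` is the only case that needs a loop: the lexer keeps reading digits until it sees a non-digit, so `(+ 12 4)` yields the single lexeme `'12'`.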
---

# How to Implement a Lexer

* The goal is to partition the entire input string (the program)
* Reading left-to-right, recognizing one token at a time
* Discard tokens irrelevant to parsing, like comments and whitespace

---

# Lookahead

* Looking at one character at a time isn't always enough

```
< <=
```

* Is `<=` an `LTOP` followed by an `ASSIGNOP`? No, it's `LEOP`.

```
i if
```

* Is `if` a variable `i` followed by a variable `f`? No, it's the `if` keyword.
* Lookahead may be required to decide where one token ends and the next token begins

---

# How to Implement a Lexer

* You can write a lexer by hand
  * Section 3.2 (Watson) has a detailed example
* Many compilers use lexer generators instead
  * Useful to know how they work

---

# Regular Languages

* There are several formalisms for specifying tokens
* Regular languages are the most popular
  * Simple and useful theory
  * Easy to understand
  * Efficient implementations

---

# Languages

**Definition.** Let Σ be a set of characters. A _language over_ Σ is a set of strings of characters drawn from Σ.
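To make the definition concrete, a language can be modeled as a membership test. In this small Python sketch the two-character alphabet and the helper names are illustrative assumptions; `is_identifier` follows the identifier rule from the Tokens slide (a letter, then letters or digits), restricted to ASCII.

```python
import string
from itertools import product

# Illustrative alphabet (an assumption): one letter and one digit.
SIGMA = ["a", "1"]

def is_identifier(s: str) -> bool:
    """Membership test: a letter followed by letters or digits (ASCII only)."""
    allowed = string.ascii_letters + string.digits
    return len(s) > 0 and s[0] in string.ascii_letters and all(c in allowed for c in s)

def strings_over(sigma: list[str], max_len: int):
    """Yield every string over sigma of length 0 through max_len."""
    for n in range(max_len + 1):
        for chars in product(sigma, repeat=n):
            yield "".join(chars)

all_strings = list(strings_over(SIGMA, 2))
identifiers = [s for s in all_strings if is_identifier(s)]
print(all_strings)  # ['', 'a', '1', 'aa', 'a1', '1a', '11']
print(identifiers)  # ['a', 'aa', 'a1']
```

Seven strings over this alphabet have length at most 2, but only three belong to the identifier language, just as not every string of English or ASCII characters is a sentence or a program.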
Example 1:

* Alphabet = English characters
* Language = English sentences
* Not every string of English characters is an English sentence

Example 2:

* Alphabet = ASCII characters
* Language = C programs
* Not every string of ASCII characters is a C program

---

# Regular Expressions

* Languages are sets of strings
* Need some notation for specifying which sets we want
* The standard notation for regular languages is _regular expressions_

---

# Atomic Regular Expressions

* Single character

> 'c' = {"c"}

* Empty string

> ε = {""}

---

# Compound Regular Expressions

* Union

> A | B = { s : s ∈ A or s ∈ B }

* Concatenation

> AB = { ab : a ∈ A and b ∈ B }

* Iteration

> A\* = { a₁a₂…aₙ : n ≥ 0 and each aᵢ ∈ A } (the case n = 0 gives "")

> A+ = { a₁a₂…aₙ : n ≥ 1 and each aᵢ ∈ A } = AA\*

---

# Example

Integer: a non-empty string of digits

> digit = '0' | '1' | '2' | '3' | '4' | '5' | '6' | '7' | '8' | '9'

> integer = digit+

---

# Example

Identifier: string of letters or digits, starting with a letter

> letter = 'A' | ... | 'Z' | 'a' | ... | 'z'

> identifier = letter (letter | digit)\*

Question: Would this have the same result?

> identifier = letter (letter\* | digit\*)

---

# Example

Email address: anyone@vassar.edu

> name = (letter | digit)+

> domain = letter+

> email = name '@' name '.' domain

---

# Regular Expressions

* Regular expressions are simple, but useful
* A regular expression R describes some set of strings L(R)
* L(R) is the "language" defined by R
* If a string _s_ belongs to that language, we write _s_ ∈ L(R)
* Regular expressions are a specification; we still need an implementation

---

# Regular Expressions ⇒ Lexer

* (1) Write a regular expression for the lexemes of each token:

> INTEGER = digit+

> IDENTIFIER = letter (letter | digit)\*

> OPENPAREN = '('

* (2) Construct R, the union of the expressions for all tokens:

> R = INTEGER | IDENTIFIER | OPENPAREN | ...

* (3) Take a prefix _s_ of the remaining input and check whether _s_ ∈ L(R).
* (4) If it is, remove _s_ from the front of the input and repeat (3).
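---

# Example

Steps (1) to (4) can be sketched with Python's standard `re` module. This is an illustration under assumptions, not a production lexer: the token set is a hypothetical subset, R is built by joining the token patterns with `|`, and step (3) tries the longest prefix first, in line with the longest-match rule for ambiguities. The function `split_lexemes` only partitions the input into lexemes; it does not yet say which token each lexeme belongs to.

```python
import re

# (1) One regular expression per token (hypothetical subset, ASCII letters only).
TOKEN_SPECS = [
    ("INTEGER", r"[0-9]+"),
    ("IDENTIFIER", r"[A-Za-z][A-Za-z0-9]*"),
    ("OPENPAREN", r"\("),
    ("CLOSEPAREN", r"\)"),
    ("WHITESPACE", r"[ \t\n]+"),
]

# (2) R is the union of all token expressions.
R = re.compile("|".join(f"(?:{pattern})" for _, pattern in TOKEN_SPECS))

def split_lexemes(text: str) -> list[str]:
    """Steps (3)-(4): take a prefix s with s in L(R), remove it, repeat."""
    lexemes = []
    while text:
        for end in range(len(text), 0, -1):   # try the longest prefix first
            if R.fullmatch(text[:end]):        # (3) is s in L(R)?
                lexemes.append(text[:end])
                text = text[end:]              # (4) remove s and repeat
                break
        else:
            raise ValueError(f"no token matches at: {text!r}")
    return lexemes

print(split_lexemes("(foo 42)"))
# ['(', 'foo', ' ', '42', ')']
```

Checking every prefix is slow (quadratic in the input length), but it follows the slide's steps directly.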
---

# Ambiguities

* How much input is used? ("<" vs "<=")
  * Rule: pick the longest possible string in L(R)
* Which token is used? ("if" keyword, not identifier)
  * Rule: pick the token whose expression is listed first, so keywords go before IDENTIFIER

---

# Next Lecture

* Lexer generator tools for the project
* Brief introduction to Python
* Release first assignment and first project milestone
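---

# Example

The two rules from the Ambiguities slide can be sketched in Python. `LEOP`, `LTOP`, and `ASSIGNOP` follow the Lookahead slide; the token name `IF`, the patterns, and the function `tokenize` are illustrative assumptions. At each position every pattern is tried: the longest match wins, and on a tie the strict `>` keeps whichever token is listed first, so `IF` must come before `IDENTIFIER`.

```python
import re

# Hypothetical token set; list order is the priority order for ties.
TOKEN_SPECS = [
    ("IF", r"if"),                            # keyword listed before IDENTIFIER
    ("IDENTIFIER", r"[A-Za-z][A-Za-z0-9]*"),
    ("INTEGER", r"[0-9]+"),
    ("LEOP", r"<="),
    ("LTOP", r"<"),
    ("ASSIGNOP", r"="),
    ("WHITESPACE", r"[ \t\n]+"),
]
COMPILED = [(name, re.compile(pattern)) for name, pattern in TOKEN_SPECS]

def tokenize(text: str) -> list[tuple[str, str]]:
    tokens = []
    pos = 0
    while pos < len(text):
        best_name, best_lexeme = None, ""
        for name, regex in COMPILED:
            m = regex.match(text, pos)         # match anchored at pos
            # Rule 1: keep the longest match.
            # Rule 2: '>' (not '>=') keeps the earlier-listed token on a tie.
            if m and len(m.group()) > len(best_lexeme):
                best_name, best_lexeme = name, m.group()
        if best_name is None:
            raise ValueError(f"unexpected character {text[pos]!r} at {pos}")
        if best_name != "WHITESPACE":          # whitespace is discarded
            tokens.append((best_name, best_lexeme))
        pos += len(best_lexeme)
    return tokens

print(tokenize("if i <= iffy"))
# [('IF', 'if'), ('IDENTIFIER', 'i'), ('LEOP', '<='), ('IDENTIFIER', 'iffy')]
```

`iffy` becomes an `IDENTIFIER` because its four-character match beats the two-character `if`; the priority rule only decides between matches of equal length.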