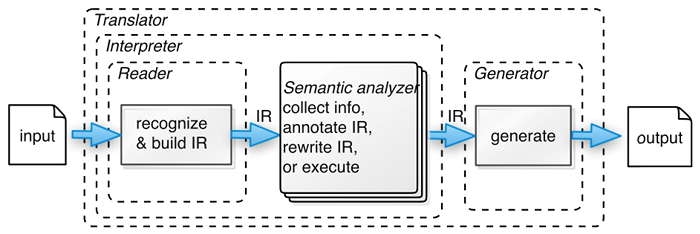

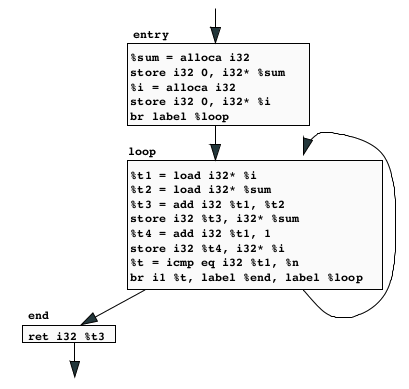

class: center, middle

# Optimization

_CMPU 331 - Compilers_

---

# Recap: Stages of Compilation

1. Lexical Analysis
2. Parsing
3. Semantic Analysis
4. Optimization
5. Code Generation

(source: _Language Implementation Patterns_ by Terence Parr)

---

# Overview

Optimization seeks to improve a program's resource utilization

* Execution time (most often)
* Code size
* Memory usage
* Network messages sent, etc.

Optimization should not alter what the program computes

* The answer must still be the same

---

# Cost of Optimizations

In practice, a conscious decision is made not to implement the fanciest optimizations known. Why?

* Some optimizations are hard to implement
* Some optimizations are costly in compilation time
* Some optimizations have low benefit
* Many fancy optimizations are all three

Goal: Maximum benefit for minimum cost

---

# Basic Blocks

A basic block is a maximal sequence of IR instructions with:

* no labels (except at the first instruction), and
* no jumps (except in the last instruction)

Idea:

* Cannot jump into a basic block (except at beginning)
* Cannot jump out of a basic block (except at end)
* A basic block is a single-entry, single-exit, straight-line code segment

Example:

```
loop:
  %1 = icmp sgt i32 %x, 0
  br i1 %1, label %body, label %end
body:
  ; do something repeatedly
  br label %loop
end:
```

---

# Control-Flow Graph

A control-flow graph is a directed graph with:

* Basic blocks as nodes
* An edge from block _A_ to block _B_ if the execution can pass from the last instruction in _A_ to the first instruction in _B_
  * the last instruction in _A_ is a jump to the entry label in _B_
  * execution can fall-through from block _A_ to block _B_
* There is one initial node
* All `ret` nodes are terminal

---

# Control-Flow Graph

The body of a function (or method) can be represented as a control-flow graph.
_(source: "Code generation for LLVM" by Magnus Myreen)_

---

# Classification of Optimizations

For languages like C, there are three granularities of optimizations.

1. Local optimizations
   * Apply to a basic block in isolation
2. Global optimizations
   * Apply to a control-flow graph (for a function/method body) in isolation
3. Inter-procedural optimizations
   * Apply across function/method boundaries

Most compilers do (1), many do (2), few do (3)

---

# Local Optimizations

* The simplest form of optimizations
* No need to analyze the whole procedure body
  * Just the basic block in question

---

# Algebraic Simplification

Some statements can be deleted

```c
x = x + 0
x = x * 1
```

Some statements can be simplified

```c
x = x * 0    =>  x = 0
y = y ^ 2    =>  y = y * y
x = x * 8    =>  x = x << 3
x = x * 15   =>  t = x << 4; x = t - x
```

(here `^` denotes exponentiation, not C's bitwise XOR; on some machines `<<` is faster than `*`, but not on all!)

---

# Constant Folding

Operations on constants can be computed at compile time

* If there is a statement `x = y op z`, for some operator `op`
* And `y` and `z` are constants
* Then `y op z` can be computed at compile time

Examples:

```
x = 2 + 2      =>  x = 4
if (2 < 0)...  =>  can be deleted
```

_(Note: cross-compilation requires care to ensure that arithmetic operations in the compiler have exactly the same behavior as in the target architecture. This matters especially for floating-point operations, where precision varies by target architecture.)_

---

# Flow of Control Optimizations

Eliminate unreachable basic blocks

* Code that is unreachable from the initial block (basic blocks that are not the target of any jump or "fall through" from a conditional)
* Why would such basic blocks occur?
* Removing unreachable code makes the program smaller
* And sometimes also faster (due to memory cache effects, improved spatial locality)

---

# Single Assignment Form

Some optimizations are simplified if each register occurs only once on the left-hand side of an assignment

* Rewrite intermediate code in _single assignment form_

```c
x = z + y        b = z + y
a = x        =>  a = b
x = 2 * x        x = 2 * b
```

(`b` is a fresh temporary register)

---

# Common Subexpression Elimination

* If a basic block is in single assignment form
* And a definition `x = rhs` is the first use of `x` in the block
* Then, when two assignments have the same right-hand side, they compute the same value

```c
x = y + z        x = y + z
...          =>  ...
w = y + z        w = x
```

(the values of `x`, `y`, and `z` do not change in the `...` code)

---

# Copy Propagation

* If `w = x` appears in a block, replace subsequent uses of `w` with uses of `x`
* Assumes single assignment form

Example:

```c
b = z + y        b = z + y
a = b        =>  a = b
x = 2 * a        x = 2 * b
```

* Only useful for enabling other optimizations
  * Constant folding
  * Dead code elimination

---

# Copy Propagation and Constant Folding

Example:

```c
a = 5            a = 5
x = 2 * a    =>  x = 10
y = x + 6        y = 16
t = x * y        t = x << 4
```

---

# Copy Propagation and Dead Code Elimination

* If `w = rhs` appears in a basic block
* And `w` does not appear anywhere else in the program
* Then, the statement `w = rhs` is _dead_ and can be eliminated
  * "dead" means it does not contribute to the program's result

Example:

```c
x = z + y        b = z + y        b = z + y
a = x        =>  a = b        =>  x = 2 * b
x = 2 * a        x = 2 * b
```

---

# Applying Local Optimizations

* Each local optimization does little by itself
* Typically optimizations interact: performing one optimization enables another
* Optimizing compilers repeat optimizations until no improvement is possible
  * Or, can be stopped at any point to limit compile time

---

# Example

Initial code:

```c
a = x ^ 2
b = 3
c = x
d = c * c
e = b * 2
f = a + d
g = e * f
```

---

# Example

Algebraic optimization:

```c
a = x * x /*changed*/
b = 3
c = x
d = c * c
e = b << 1 /*changed*/
f = a + d
g = e * f
```

---

# Example

Copy propagation:

```c
a = x * x
b = 3
c = x
d = x * x /*changed*/
e = 3 << 1 /*changed*/
f = a + d
g = e * f
```

---

# Example

Constant folding:

```c
a = x * x
b = 3
c = x
d = x * x
e = 6 /*changed*/
f = a + d
g = e * f
```

---

# Example

Common subexpression elimination:

```c
a = x * x
b = 3
c = x
d = a /*changed*/
e = 6
f = a + d
g = e * f
```

---

# Example

Copy propagation:

```c
a = x * x
b = 3
c = x
d = a
e = 6
f = a + a /*changed*/
g = 6 * f /*changed*/
```

---

# Example

Dead code elimination:

```c
a = x * x
/*deleted*/
/*deleted*/
/*deleted*/
/*deleted*/
f = a + a
g = 6 * f
```

---

# Example

Final form:

```c
a = x * x
f = a + a
g = 6 * f
```

---

# Peephole Optimizations

* These optimizations work on:
  * Target-independent intermediate code, or
  * Machine-specific assembly language
* The "peephole" is a short sequence of (usually contiguous) instructions
* The optimizer replaces the sequence with an equivalent but faster one
* Write peephole optimizations as replacement rules

Example:

```
%t = add i32 %x, 5      =>  %y = add i32 %x, 15
%y = add i32 %t, 10
```

(The two instructions must be in the same basic block, to make sure the second `add` isn't the target of a jump. The rule also assumes `%t` has no other uses.)

---

# Peephole Optimizations

* Many (but not all) of the basic block optimizations can be handled as peephole optimizations.
* Like local optimizations, peephole optimizations must be applied repeatedly for maximum effect.

---

# Local Optimizations

* Intermediate code is helpful for many optimizations
* Many simple optimizations can be applied on assembly language
* "Program optimization" isn't really an accurate name
  * Code produced by "optimizers" is not optimal in any reasonable sense
  * "Program improvement" is a better name for it
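---

# Example: Sketch in Python

The worked example from the earlier slides can be reproduced with a small script. This is a minimal sketch, not how a production compiler is structured: the tuple encoding `(dest, op, a, b)`, the pass names, and the `live_out` parameter are all assumptions made for illustration, and every pass relies on the block being in single assignment form. `**` stands in for the slides' `^` (exponentiation).

```python
# An instruction is (dest, op, a, b). op "=" is a copy or constant load (b is None).
# Operands are variable names (str) or integer constants (int).
OPS = {"+": lambda p, q: p + q, "*": lambda p, q: p * q, "<<": lambda p, q: p << q}

def algebraic(block):
    """Rewrite x ** 2 as x * x and x * 2 as x << 1."""
    out = []
    for dest, op, a, b in block:
        if op == "**" and b == 2:
            op, b = "*", a
        elif op == "*" and b == 2:
            op, b = "<<", 1
        out.append((dest, op, a, b))
    return out

def copy_propagate(block):
    """After `w = x`, replace later uses of w with x (x may be a constant)."""
    copies, out = {}, []
    for dest, op, a, b in block:
        a, b = copies.get(a, a), copies.get(b, b)
        if op == "=":
            copies[dest] = a
        out.append((dest, op, a, b))
    return out

def fold_constants(block):
    """Evaluate operations whose operands are both constants."""
    out = []
    for dest, op, a, b in block:
        if op in OPS and isinstance(a, int) and isinstance(b, int):
            op, a, b = "=", OPS[op](a, b), None
        out.append((dest, op, a, b))
    return out

def eliminate_common_subexpressions(block):
    """Replace a repeated right-hand side with a copy of its first result."""
    seen, out = {}, []
    for dest, op, a, b in block:
        key = (op, a, b)
        if op != "=" and key in seen:
            op, a, b = "=", seen[key], None
        elif op != "=":
            seen[key] = dest
        out.append((dest, op, a, b))
    return out

def eliminate_dead_code(block, live_out):
    """Walk backwards, keeping only instructions whose result is still needed."""
    live, kept = set(live_out), []
    for dest, op, a, b in reversed(block):
        if dest in live:
            kept.append((dest, op, a, b))
            live.update(v for v in (a, b) if isinstance(v, str))
    return kept[::-1]

def optimize(block, live_out):
    """Repeat all passes until the block stops changing."""
    passes = (algebraic, copy_propagate, fold_constants,
              eliminate_common_subexpressions)
    while True:
        new = block
        for run_pass in passes:
            new = run_pass(new)
        new = eliminate_dead_code(new, live_out)
        if new == block:
            return block
        block = new

example = [
    ("a", "**", "x", 2),
    ("b", "=", 3, None),
    ("c", "=", "x", None),
    ("d", "*", "c", "c"),
    ("e", "*", "b", 2),
    ("f", "+", "a", "d"),
    ("g", "*", "e", "f"),
]
result = optimize(example, live_out={"g"})
for dest, op, a, b in result:
    print(f"{dest} = {a}" if op == "=" else f"{dest} = {a} {op} {b}")
```

Run on the initial code with `g` as the only value needed after the block, it prints the three-instruction final form: `a = x * x`, `f = a + a`, `g = 6 * f`. The passes run in a fixed order inside a loop rather than in the exact order of the slides; the loop stops once a full round changes nothing.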